Projects

-

A large body of work focuses on multi-spectral capture and analysis, but multi-spectral display remains a challenge. Most prior work on multi-primary displays uses ad hoc narrow-band primaries that assure a larger color gamut but cannot assure good spectral reproduction. Content-dependent spectral analysis is the only way to produce good spectral reproduction with such primaries, but it cannot be applied to general data sets or continuous image sequences. Wide-band primaries, however, are better suited to good spectral reproduction because they cover more of the spectral range, yet they have not been explored much.

-

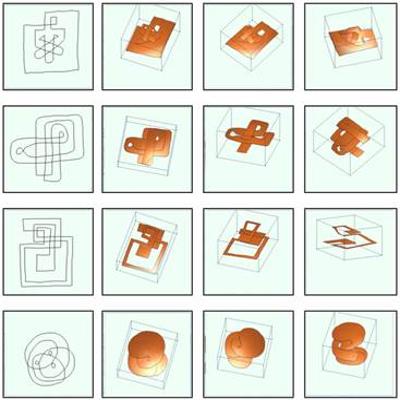

Self-overlapping curves are boundaries of deformed disks with multiple overlaps. In this project we first find the deformation pattern of the disk, called the immersion, which produces a self-overlapping curve. Next, we propose a novel algorithm for morphing between two self-overlapping curves such that each intermediate curve is also self-overlapping. Our morphing algorithm is based on an aesthetically good triangulation of the interior of a self-overlapping curve, and this triangulation algorithm is in turn based on our immersion algorithm.

-

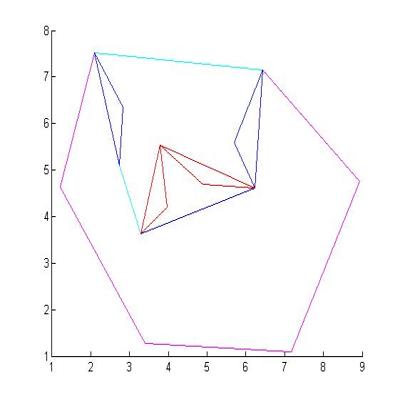

The 2D nesting problem is a well-known problem in computational geometry where the objective is to fit multiple shapes into a fixed region. Put simply, it is similar to solving a jigsaw puzzle using just the geometry of the polygonal shapes. We approach this problem using a new geometric algorithm called the convex hull hierarchy. Our hull hierarchy exploits features of the polygons such as convex and concave regions, termed protrusions and cavities respectively. We identify initial cavities using the convex hulls of the polygons and then define protrusions based on the cavities. This process is performed recursively, yielding a tree structure in which each node defines a new polygon that is either a cavity or a protrusion. We then use the hierarchy trees generated for each polygon to perform matching using criteria such as polygon area, perimeter, and tree isomorphism.
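The first level of such a hierarchy can be sketched as follows. This is a minimal illustration, not the project's implementation: it assumes a simple polygon given as counter-clockwise `(x, y)` tuples, and the function names (`convex_hull`, `top_level_cavities`, `polygon_area`) are hypothetical. A cavity is taken here to be the stretch of polygon boundary between two consecutive hull vertices; the recursive step that derives protrusions from cavities is not shown.

```python
def cross(o, a, b):
    """Z-component of (a - o) x (b - o); positive for a left turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points):
    """Andrew's monotone chain; hull vertices in counter-clockwise order.
    Collinear points on hull edges are left out."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def polygon_area(vertices):
    """Unsigned area via the shoelace formula."""
    total = 0
    for i, (x0, y0) in enumerate(vertices):
        x1, y1 = vertices[(i + 1) % len(vertices)]
        total += x0 * y1 - x1 * y0
    return abs(total) / 2


def top_level_cavities(polygon):
    """Return the boundary chains that lie strictly inside the convex hull.
    Each chain starts and ends at a hull vertex, so closing it with the
    hull edge gives the cavity polygon. Zero-area chains are skipped."""
    hull = set(convex_hull(polygon))
    n = len(polygon)
    start = next(i for i, p in enumerate(polygon) if p in hull)
    cavities, chain = [], [polygon[start]]
    for k in range(1, n + 1):
        p = polygon[(start + k) % n]
        chain.append(p)
        if p in hull:
            if len(chain) > 2 and polygon_area(chain) > 0:
                cavities.append(chain)
            chain = [p]
    return cavities
```

For a U-shaped polygon, the single notch is reported as one cavity whose area can then feed a matching criterion such as the area comparison mentioned above.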

-

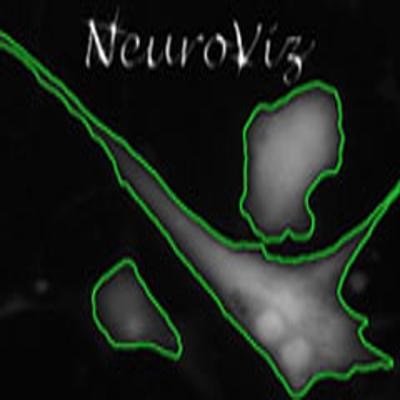

Cellular biology deals with studying the behavior of cells. Current time-lapse imaging microscopes help us capture the progress of experiments at intervals that allow us to understand the dynamic and kinematic behavior of the cells. On the other hand, these devices generate such massive amounts of data (250 GB per experiment) that manually sieving through the data to identify interesting patterns becomes virtually impossible. In this project we propose an end-to-end system to analyze time-lapse images of cultures of human Neural Stem Cells (hNSC). It includes an image processing system that extracts the relevant geometric and statistical features within and between images, a database management system to manage and handle queries on the data, a visual analytic system to navigate through the data, and a visual query system to explore different relationships and correlations between the parameters. In each stage of the pipeline we make novel algorithmic and conceptual contributions. The entire system design is motivated by many different, as yet unanswered, exploratory questions pursued by the neuroscience community.

-

The past decade has seen tremendous advances, and very soon we will find cameras and projectors embedded in handheld devices such as mobile phones and digital cameras. Most mobile devices nowadays are equipped with cameras. With the advent of pico projectors, projection-enabled mobile devices are also becoming popular. In December 2009, AT&T introduced the LG eXpo phone, which has a pico projector attachment. Rumor has it that Apple is not far behind, with possible offerings in the iPhone 5. Nikon is embedding pico projectors in other handheld devices such as digital cameras. With this trend, users will very soon seek greater control over the resources available in a display or a camera. Such resources include color resolution, spatial resolution, spectral resolution, and so on. For example, they would want to target resolution at the most important object in the scene during capture or display. Or they would want to change the spectral sensitivity of the primaries based on the scene illumination, e.g. CMY for dark scenes, RGB for bright scenes, and 6-primary for colorful scenes. Thus, we envision content-adaptive, user-controllable capture and display devices becoming increasingly popular in the future. We have already started to see some work in this direction, including the first multi-modal camera, built at our lab at UCI, which offers adaptive spectral sensitivity for optimal signal-to-noise ratio (SNR). There is also recent work on content-adaptive parallax-barrier displays. These give users much greater control over the device and are hence much more flexible than before.

-

Imagine a workspace covered by a large number of displays that are not only purveyors of information, but active entities that can interact with the other components of the workspace (e.g. user, data, other devices). Imagine a user moving around such a workspace while engaged in a remote collaborative task. Instead of the user consciously restricting their movement to stay near the display, a display of the desired resolution and scale factor moves around with them. Imagine a group of users designing annotations on a large map. The users interact with the map in a shared manner, zooming in and out at different locations without worrying about synchronizing their interactions to avoid conflicts, while the map changes appropriately to cater to these multiple, possibly conflicting, cues. Imagine a user working with a display in one area of the workspace who upgrades to a display of larger scale and resolution simply by using gestures to move displays from other areas of the workspace to the desired location. Imagine a wall in the workspace that is textured (e.g. with wallpaper), and displays that modify their images to adapt to the texture in a way that is perceptually faithful to the original image. We call such intelligent displays, which can be seamlessly integrated with the environment so that both the user and the data find them at their service anywhere within the workspace, ubiquitous displays.

-

Current graphics and visualization systems have to be built to handle gigantic data sets, such as those from large scientific simulations, including nuclear and power simulations, and geometric data sets such as digital models of defense and commercial equipment like aircraft, ships, and power plants. Such large data sets cannot fit into the main memory of the machines or be rendered interactively on current graphics systems. This project involves fundamental research in designing algorithms for efficient compression of these large data sets that enable fast decompression of the required portion of the data set in main memory and efficient interactive rendering. This study explores three research directions to solve the problem of interactive walkthrough of gigantic data sets: syntactic compression, semantic compression, and access-sensitive data layouts. Syntactic compression deals with compressing data bits. In this project, compression algorithms that exhibit properties like random-access decompression and stop-any-time decompression are studied. Semantic compression deals with representing objects with fewer primitives. In this context, this research studies parameterizable semantic compression algorithms that trade off space for compression efficiency. Finally, optimal data layouts depend on the application, and this study explores optimal layout schemes for 3D data sets on external memory for interactive walkthrough applications. This includes partitioning the 3D data set using different metrics, such as normal vector deviation and spatial distance between objects, and computing the linear layout of these partitions in external memory using graph algorithms.
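The random-access property can be illustrated with a generic block-based scheme. This sketch is not the project's compression algorithm: it simply compresses fixed-size blocks independently with the standard `zlib` module, so that a requested byte range touches only the blocks it overlaps. The block size and the function names `compress_blocks` and `read_range` are assumptions made for the example.

```python
import zlib

BLOCK_SIZE = 4096  # hypothetical block size, chosen only for illustration


def compress_blocks(data, block_size=BLOCK_SIZE):
    """Compress each fixed-size block on its own so it can be decoded alone."""
    return [
        zlib.compress(data[i:i + block_size])
        for i in range(0, len(data), block_size)
    ]


def read_range(blocks, start, length, block_size=BLOCK_SIZE):
    """Return data[start:start + length], decompressing only the blocks
    that overlap the requested range."""
    if length <= 0:
        return b""
    first = start // block_size
    last = (start + length - 1) // block_size
    chunk = b"".join(zlib.decompress(b) for b in blocks[first:last + 1])
    offset = start - first * block_size
    return chunk[offset:offset + length]


if __name__ == "__main__":
    data = bytes(range(256)) * 100
    blocks = compress_blocks(data)
    # Only blocks 1 (bytes 4096..8191) is decompressed for this request.
    assert read_range(blocks, 5000, 300) == data[5000:5300]
```

Compressing blocks independently usually costs some compression ratio compared with compressing the whole stream at once, which is one form of the space-versus-access trade-off such schemes have to balance.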

-

Tiling multiple projectors is one of the most popular ways of building large-area displays. These displays have been used for many applications such as visualization, entertainment, training, and simulation. Contemporary researchers envision future workspaces with ubiquitous pixels rendered by multi-projector displays of various scales and forms, aiding users in collaboration, interface, and visualization. Seamless multi-projector displays require automated camera-based techniques that register the imagery coming from multiple projectors. This involves two steps: geometric registration, which guarantees that collocated pixels from multiple projectors show the same content, and color calibration, which removes color seams caused by additional brightness in the overlap region and by differences in the color characteristics of the projectors. Both problems become especially challenging when building displays with commodity projectors, which show significant color variations and often need short-throw lenses mounted on them to enable a compact setup, leading to severe non-linear geometric distortions. Hence, most commercial vendors support only high-end projectors, which keeps the technology from reaching the masses.
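The extra brightness in an overlap region is commonly handled by attenuating each projector there so that the contributions sum to one. The sketch below shows a simple linear ramp for two side-by-side projectors. It is a textbook-style illustration under strong assumptions (identical projectors, weights applied to linear luminance, overlap measured in pixel columns of the display), not the calibration method developed in this project, and `blend_weights` is a hypothetical name.

```python
def blend_weights(x, a_end, b_start):
    """Blending weights (w_a, w_b) at display column x.

    Projector A covers columns up to a_end and projector B covers columns
    from b_start onward, with b_start < a_end, so [b_start, a_end] is the
    overlap. Inside the overlap A fades out linearly while B fades in.
    """
    if x <= b_start:
        return 1.0, 0.0
    if x >= a_end:
        return 0.0, 1.0
    t = (x - b_start) / (a_end - b_start)
    return 1.0 - t, t


if __name__ == "__main__":
    for column in (30, 40, 45, 50, 55, 60, 70):
        w_a, w_b = blend_weights(column, a_end=60, b_start=40)
        print(column, w_a, w_b)
```

Because real projectors differ in brightness and color response, a ramp like this removes only the doubled brightness; the remaining differences between projectors still need photometric calibration.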

-

This is a collaborative project with Prof. Dan Aliaga's group at Purdue University. In this system we superimpose multiple projections onto an object of arbitrary shape and color to produce high-resolution appearance changes. Our system produces appearances at a resolution higher than what is possible with a single projector and can change appearances at near-interactive rates. We achieved several appearance edits, including specular lighting, subsurface scattering, inter-reflections, and changes in color, texture, and geometry, on objects with different shapes and colors.

-

Studies of the contrast sensitivity of the human eye show that our suprathreshold contrast sensitivity follows Weber's law and hence increases proportionally with the mean local luminance. In this project, we apply this fact to design a contrast-enhancement method for images that improves local image contrast by controlling the local image gradient with a single parameter.
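To give a feel for what a single-parameter local contrast control looks like, here is a deliberately simple stand-in. It is not the method developed in this project: it scales each sample's deviation from its local mean by `1 + tau` on a 1D signal and clamps the result to the valid range. The name `enhance_local_contrast` and the parameters `tau` and `radius` are assumptions for the example.

```python
def enhance_local_contrast(signal, tau, radius=1, lo=0.0, hi=1.0):
    """Scale each sample's deviation from its local mean by (1 + tau).

    tau = 0 leaves the signal unchanged; larger tau steepens local
    differences. Results are clamped to [lo, hi] to stay displayable.
    """
    n = len(signal)
    out = []
    for i, value in enumerate(signal):
        window = signal[max(0, i - radius):min(n, i + radius + 1)]
        mean = sum(window) / len(window)
        boosted = mean + (1.0 + tau) * (value - mean)
        out.append(min(hi, max(lo, boosted)))
    return out


if __name__ == "__main__":
    step = [0.25, 0.25, 0.75, 0.75]
    print(enhance_local_contrast(step, tau=1.0))
```

On a step edge the difference between the two samples straddling the edge grows, while flat regions away from the edge are left unchanged. Clamping is the crude part of this stand-in, since it can saturate values near the ends of the range.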

-

Triangle strip representations of a model have traditionally been used for efficient rendering. Vertex caching techniques for rendering these triangle strips improve coherence in memory access and boost performance further. A generalized triangle strip is an edge-connected sequence of non-repeating triangles. In order to render such strips correctly, non-alternating vertices might have to be repeated, or “swap” commands have to be used if available. Recently, due to the availability of larger vertex caches in graphics accelerators, remarkable performance increases can be achieved using generalized triangle strips.
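The mechanics described above can be sketched in a few lines. The first function expands a strip of vertex indices into triangles, treating a repeated vertex as the usual way of emulating a "swap" (it produces a degenerate triangle that is dropped). The second counts misses in a FIFO vertex cache. Both are illustrative assumptions rather than the project's code: the names `strip_to_triangles` and `cache_misses` are hypothetical, and real hardware caches may use a different replacement policy.

```python
from collections import deque


def strip_to_triangles(strip):
    """Expand a triangle strip (list of vertex indices) into triangles.

    Every three consecutive indices form a triangle. Triangles at odd
    positions have their first two vertices exchanged so all triangles
    keep the same orientation. Degenerate triangles, which appear when a
    vertex is repeated to emulate a swap, are dropped.
    """
    triangles = []
    for i in range(len(strip) - 2):
        a, b, c = strip[i], strip[i + 1], strip[i + 2]
        if a == b or b == c or a == c:
            continue
        triangles.append((a, b, c) if i % 2 == 0 else (b, a, c))
    return triangles


def cache_misses(index_stream, cache_size):
    """Count vertices that must be fetched when a FIFO cache of the given
    size sits in front of the vertex stream."""
    cache = deque(maxlen=cache_size)  # the oldest entry is evicted when full
    misses = 0
    for v in index_stream:
        if v not in cache:
            misses += 1
            cache.append(v)
    return misses


if __name__ == "__main__":
    plain = [0, 1, 2, 3, 4]       # triangles share edges 1-2, then 2-3
    turned = [0, 1, 2, 1, 3, 4]   # repeating vertex 1 turns the strip
    print(strip_to_triangles(plain))
    print(strip_to_triangles(turned))
    print(cache_misses(turned, cache_size=4))
```

In the second strip the repeated vertex 1 makes the last triangle share edge 1-3 instead of edge 2-3, so the strip fans around vertex 1. Because the repeated index is still in the cache, it costs an index but not a vertex fetch.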

Finding a single generalized triangle strip that covers the entire model without modifying the model is NP-complete. Hence, traditionally in computer graphics, multiple triangle strips are used to represent a model. Recent work introduces a small number of additional triangles to find a single-strip representation, and hence a linear ordering of the triangles of the entire model. Applications of triangle strip representations include generation of space-filling curves and fundamental cycles on manifolds, as well as unfolding of triangle strips for origami.

-

We present simple rendering techniques implemented using traditional graphics hardware to achieve the effects of charcoal drawing. The effects include characteristics of charcoal drawings like broad grainy strokes and smooth tonal variations that are achieved by smudging the charcoal by hand. Further, we also generate the closure effect that is used by artists at times to avoid hard silhouette edges. All these effects are achieved using contrast enhancement operators on textures and/or colors of the 3D model.